🤖 Tech Talk: Inside the AI frenzy — Massive bets, Murky returns

Plus: ‘AI should remember your life,’ Sam Altman says on OpenAI’s next big bet; Top trends we covered in 2025; Mini apps in Google Gemini; and more...

Dear reader,

As 2025 draws to a close, most of us would agree that artificial intelligence (AI) has dominated conversations, fuelled by its potential, the rapid maturation of India’s tech ecosystem, fears of job losses, and concerns over an AI bubble driven by irrational exuberance.

AI is no longer a niche technology but has become a defining economic and strategic force. The field has moved decisively from classical AI to Generative AI (Gen AI), and now to Agentic AI.

Traditional AI focused on analysing historical data to detect patterns and make predictions. Gen AI changed the game by producing original content, such as text, code, images, audio and video, from simple prompts in natural languages such as English and Hindi. That shift went mainstream with the launch of ChatGPT three years ago. Today, OpenAI says ChatGPT has about 800 million weekly users, and Sensor Tower estimates Gen AI apps could generate over $10 billion in revenue by 2026.

The next leap is Agentic AI. These systems go beyond content generation to reason, plan and take autonomous actions on a user’s behalf, nudging AI closer to artificial general intelligence (AGI). Over the past year, Agentic AI has gained rapid traction. This includes systems that can execute transactions such as payments, as OpenAI has demonstrated for users in the US. AI-native browsers are accelerating this shift. Many of them, including Perplexity’s Comet, Opera’s Neon, Dia and Brave, are built on Google’s open-source Chromium project.

No company illustrates the scale of the AI boom better than Nvidia. The chipmaker, the biggest beneficiary of AI infrastructure spending, became the world’s first company to reach a $5 trillion market capitalisation in late October. While its current mcap is around $4.6 trillion, forecasts suggest Nvidia’s valuation could climb to $8-10 trillion by the end of the decade, driven by relentless demand for AI computing power.

Nvidia’s rise rests on its dominance in high-performance GPUs, which were once designed for gaming and are now indispensable for training and running AI models. It has also reinvented itself as an AI infrastructure giant, combining hardware with its CUDA software platform and deep data-centre partnerships. Nearly 89% of its revenue now comes from data centres, with gaming and AI PCs contributing about 10%. Nvidia estimates that global spending on AI infrastructure will reach $3-4 trillion by 2030.

Globally, Gartner expects AI spending to approach $1.5 trillion in 2025, with a large share flowing into data centres powered by Nvidia GPUs and Google’s tensor processing units (TPUs). India mirrors this trend: IT spending in the country is projected to grow 10.6% year-on-year to $176 billion in 2026, while data-centre spending is expected to surge 20.5%, outpacing all other segments.

At the core of these facilities are expensive AI chips—an Nvidia H100 can cost up to $40,000—making supply tight and access uneven. Under the IndiaAI Mission, the government has stepped in, procuring 38,000 GPUs and offering them to domestic firms at subsidised rates.

But are Gen AI and Agentic AI delivering returns?

An October Wharton enterprise study found that 75% of large US firms already report positive ROI from AI, and four in five expect positive returns within two to three years. Two-thirds are spending more than $5 million annually on Gen AI, and over 10% have budgets exceeding $20 million.

Yet scepticism persists. Gartner warned that by the end of 2025, 30% of Gen AI projects would be abandoned after proof of concept, citing poor data quality, rising costs, weak risk controls and unclear business value. Greyhound Research’s CIO Pulse 2025 reported that 64% of Indian organisations have not scaled even half of their AI pilots. An MIT study added fuel to the debate. It found that despite $30–40 billion in enterprise Gen AI investment, 95% of organisations have seen no measurable returns. Productivity gains, it argued, accrue to individuals rather than to balance sheets, and projects falter because of fragile workflows and weak integration into core operations.

These contradictions point to real risks. Aggressive spending could squeeze margins, delay payback, and weaken demand—while any shift in investor sentiment could puncture lofty valuations. Still, the spending shows little sign of slowing.

Critics warn of a “circular investment” loop, where the same players fund, supply, and consume AI infrastructure. In September, OpenAI announced that Nvidia would invest up to $100 billion in the company, taking an equity stake, as the two jointly build massive GPU-powered data centres. Alongside Oracle Corp., OpenAI also unveiled plans for five large facilities under the $500-billion “Stargate” project, which are expected to deploy hundreds of thousands of Nvidia chips. OpenAI is also reportedly in talks with Amazon for an investment that could exceed $10 billion.

OpenAI is not alone. Meta, Google and other Big Tech firms are accelerating their investments in AI infrastructure at a pace rarely seen before, intensifying both optimism and anxiety.

Does this surge signal the start of an AI bubble, or another AI winter?

Nvidia co-founder and CEO Jensen Huang has dismissed such concerns. Speaking to Bloomberg Television on 29 October, Huang said Nvidia’s latest chips are expected to generate close to half a trillion dollars in revenue. “I don’t believe we are in an AI bubble,” he said. “All of these different AI models are being used, and customers are paying for the services.”

That said, AI agents can write, deploy and debug code at speed, but they also hallucinate, overlook basic errors and, at times, disrupt the very systems they are designed to improve. Greater autonomy comes with clear trade-offs. An AI agent may generate code in minutes, but without human judgement the risk of costly failures remains high, and fully autonomous AI developers cannot yet be trusted. A randomised controlled trial by Model Evaluation and Threat Research (METR) found that experienced open-source developers took 19% longer to complete tasks when using AI tools than when working without them. In practice, AI can slow developers down rather than speed them up. Trust, therefore, is critical for enterprise adoption.

Enterprises also need clarity on when to use classical AI, generative AI or agentic AI. The technology should follow the problem, not the other way around. Without this discipline, organisations risk falling into “AI washing”, where inflated claims mask limited real-world impact.

Meanwhile, the infrastructure boom is also creating knock-on effects. Demand for GPUs is driving up power consumption, putting pressure on electricity grids and raising concerns around water use and noise pollution. Data-centre operators are responding by shifting to renewable energy, experimenting with advanced cooling methods and even exploring the idea of space-based data centres. Some of the most creative solutions are emerging in Nordic countries. AI data centres are being built inside Cold War-era bunkers and abandoned granite mines, where naturally cool temperatures reduce energy needs. Excess heat from these facilities is also being recycled. In Espoo, Finland, heat generated by Microsoft data centres now supplies hot water to radiators serving roughly 40,000 residents.

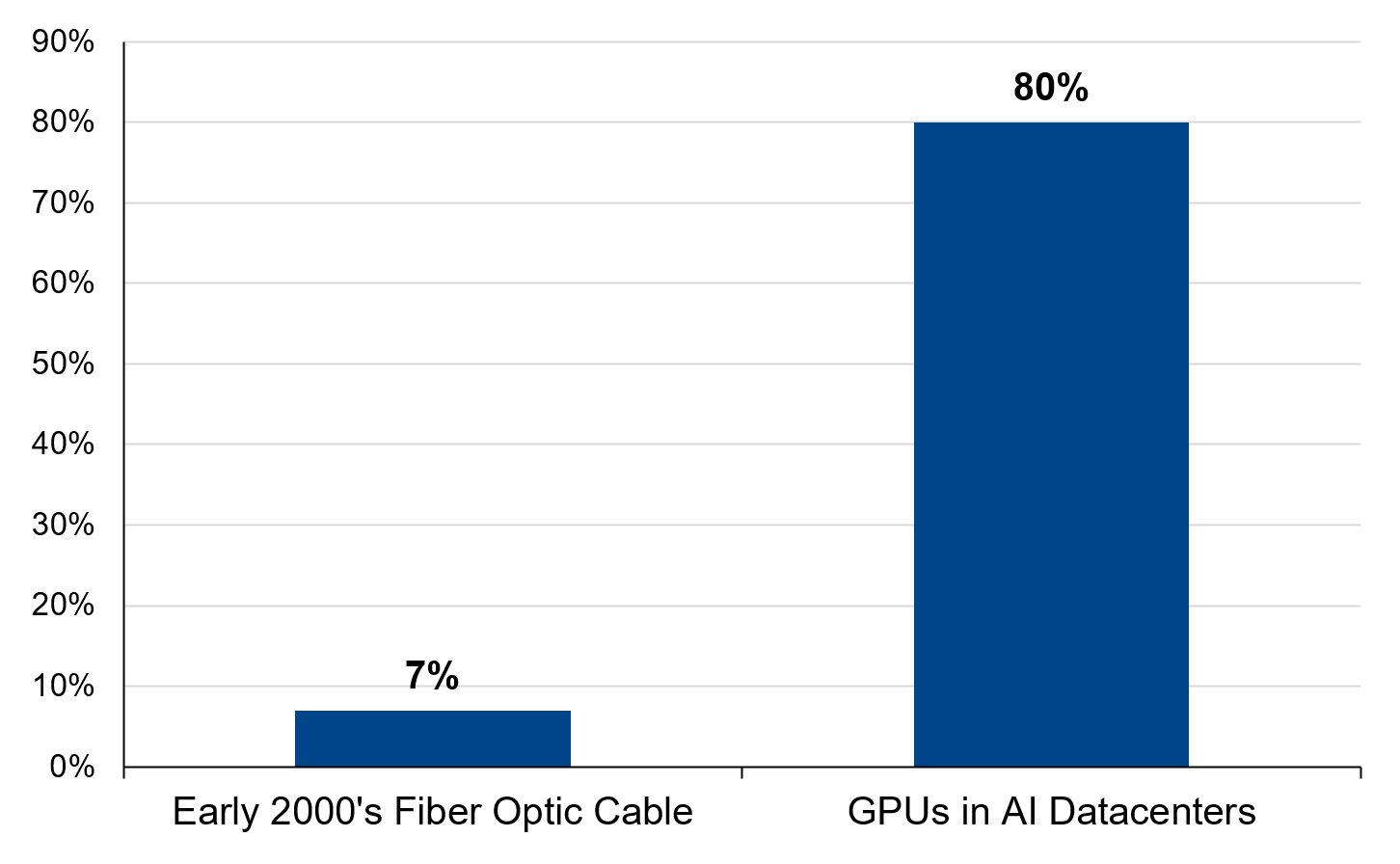

From an investment perspective, JP Morgan researchers caution that every major capital-expenditure cycle carries the risk of overbuilding. They argue, however, that the current AI cycle looks very different from the dot-com boom. At the peak of that boom, only about 7% of the global fibre-optic network was utilised, which left years of excess capacity. Today, data-centre vacancy rates are at record lows, utilisation is close to 80%, and demand for compute continues to outstrip supply. More data has been created in the past three years than in all of previous history, and AI workloads are expanding rapidly.

Even so, they add that caution is not misplaced. “The scale of spending is enormous, the pace unprecedented and some assumptions around ROI—like the useful lives of assets—remain open questions. History reminds us that enthusiasm can run ahead of reality. Yet so far, today’s players are far better capitalised than those of the dot-com era, AI monetisation is underway and the risk of overbuilding seems limited in the near term. As this story unfolds, investors should focus on selectivity, leaning into active management to separate transformative winners from bubbly valuations,” they conclude.

Here are a few other trends we covered in Mint Tech Talk in 2025:

AI patents comprise more than 25% of all tech patents filed in India

How enterprises are shifting focus from digital to AI-first strategies

AI Tool of the Week

By AI & Beyond, with Jaspreet Bindra and Anuj Magazine

The AI hack we unlocked today is based on Google Gemini’s new capability—mini-apps in Gemini (powered by Opal, Google’s experimental framework for building interactive applications).

What problem do mini-apps in Gemini solve?

Traditional AI chatbots give you answers, but you still have to do the work. Ask for a budget breakdown? You get text that you copy into Excel. Need a project tracker? You manually recreate it in another tool. Want to compare options? You’re building tables yourself.

This creates friction in the user experience: AI understands what you need, but you’re stuck doing manual work to make its output usable.

Mini-apps in Gemini close this gap by creating ready-to-use, interactive tools right in your chat. No more copying, pasting, or rebuilding in other apps.

How to access: Go to gemini.google.com → Click the beaker icon (top right) → Enable “Gems with mini apps”

Mini-apps can help you:

Create interactive tools instantly: Generate calculators, planners, trackers, and games without leaving the chat.

Build functional prototypes: Test ideas with working apps before investing in full development.

Collaborate more effectively: Share interactive tools that teammates can use, not just read.

Example: Suppose you’re launching a new product and need a streamlined workflow for your marketing team. Here’s how mini-apps in Gemini help:

Click “New Gem”: Start creating your custom mini-app from the Gems section

Describe what you need: “Create an app that evaluates product features, generates marketing content ideas, creates vision boards, and outlines next steps”

Gemini builds the workflow: You get a multi-step interactive app with sections for Product Name, Description, Content Generation, Vision Board Creation, and HTML rendering

Use it immediately: Your team inputs product details, and the app automatically generates marketing content, visual concepts, and actionable next steps

Remix and refine: Click “Remix” to modify steps, add fields, or customise the workflow for your specific needs

What makes this special?

No technical skills needed: Build functional apps through simple conversation

Everything in one place: From request to working tool without switching platforms

Instant iterations: Modify apps by simply asking—no manual editing required

Note: The tools and analysis featured in this section demonstrated clear value based on our internal testing. Our recommendations are entirely independent and not influenced by the tool creators.

AI BITS AND BYTES

‘AI should remember your life’: Sam Altman on OpenAI’s next big bet

OpenAI CEO Sam Altman has suggested that the next major leap in AI will come not from sharper reasoning abilities but from something more fundamental: memory. Speaking on a recent podcast with technology journalist Alex Kantrowitz, Altman outlined a future in which AI systems retain and learn from vast amounts of personal data across an individual’s lifetime, reshaping the idea of a digital personal assistant.

According to Altman, today’s AI tools are already strong at reasoning tasks, but they fall short when it comes to long-term recall. Humans, he argued, remain the best personal assistants because they understand context and nuance, yet they struggle to remember details consistently.

OpenAI strengthens ChatGPT safeguards for users aged 13 to 17: Here’s how

OpenAI has announced changes to its Model Spec, the internal rulebook that guides how its AI systems behave, with a new and explicit focus on protecting teenagers who use ChatGPT. The San Francisco-based company said the updated guidelines put the safety of users aged 13 to 17 above all other objectives, including the long-standing goal of maximising helpfulness and user freedom. The move comes amid growing scrutiny of how generative AI tools affect younger users.

How an AI assistant can help you protect your digital footprint

Consent managers under India’s Digital Personal Data Protection Act (DPDPA) make preferences easier to track and manage, but the burden of understanding privacy risks still falls on users. An AI privacy assistant can bridge this gap by explaining rights and consequences in a language and context that the user understands.

9 viral AI prompts on Grok that decode your personality without horoscopes

An AI expert’s viral X post claims chatbots like Grok can replace astrologers using powerful birth date prompts. The nine shared prompts promise deep self-analysis, personality insights and future growth lessons without horoscopes or tarot cards. Here are the prompts for you to try.

The ‘smart doctor’ at the heart of India’s digital health push

The government is moving to deploy a clinical decision support system across nearly 70,000 public and private hospitals in India to standardise the quality of care and reduce medical errors. The tool, dubbed “smart doctor”, has been developed by AIIMS New Delhi and will be rolled out under the government’s flagship Ayushman Bharat Digital Mission.

Hope you folks have a great weekend, and your feedback will be much appreciated — just reply to this mail, and I’ll respond.

Edited by Rashmi Sanyal. Produced by Tushar Deep Singh.